The Polish Principle

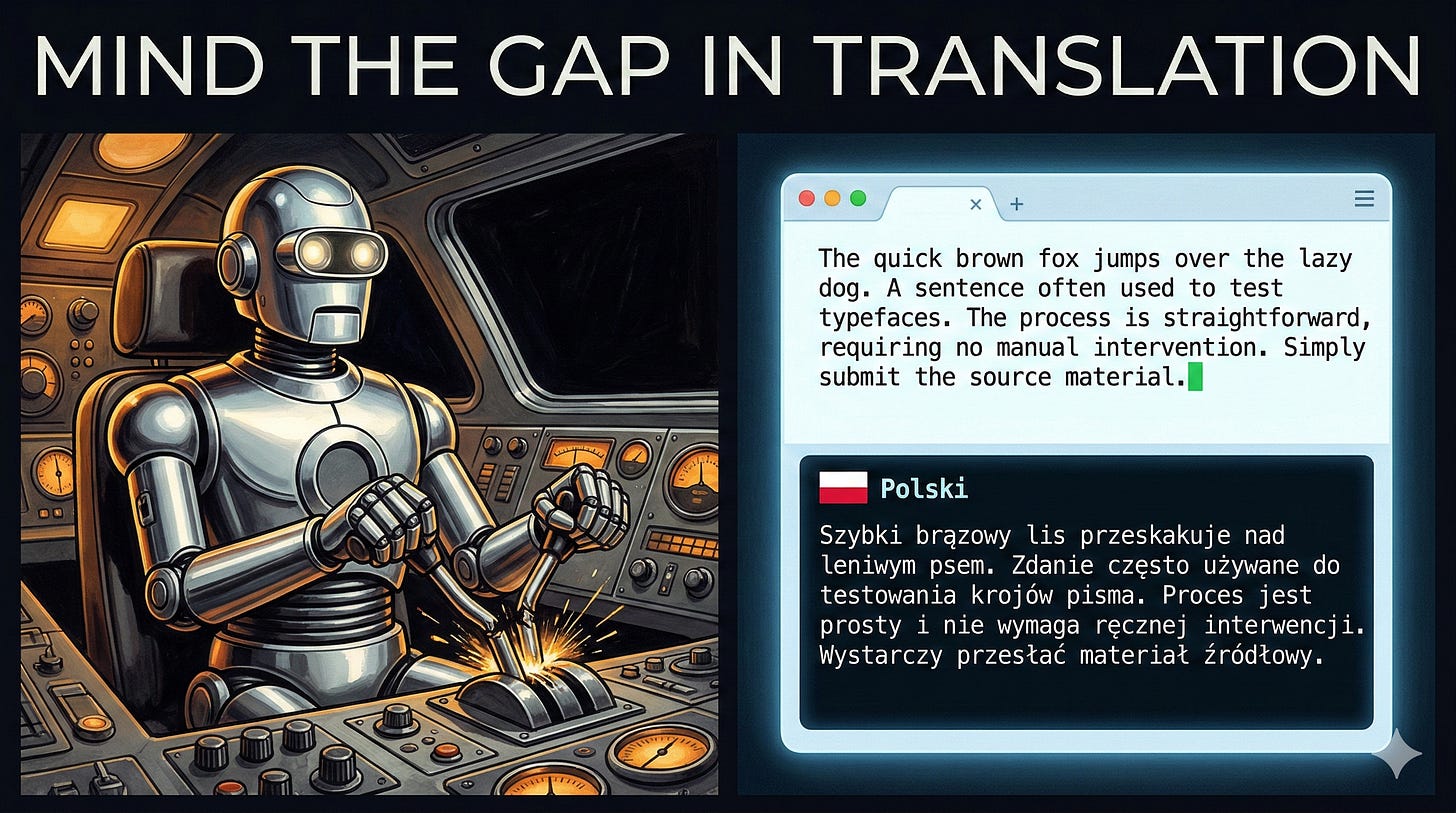

I saw a comment recently from someone who asked an LLM to “polish” a paragraph. The LLM didn’t edit the paragraph. It translated the text into Polish.

The machine did exactly what it was asked.

I grew up reading Isaac Asimov, starting in elementary school, and his robot short stories were a big influence on me. When I saw that comment, I remembered an Asimov short story I probably last read in 1984, called “Risk.” You can find it in his collection “The Complete Robot.”

A robot pilot is instructed to pull the control lever of an experimental spaceship. The exact command was: “Pull the control toward you firmly. Firmly! Then hold it until the drive engages.” The researchers at Hyper Base had already seen previous attempts fail because the robot did not pull back firmly enough, so they doubled down on the instruction rather than specifying a torque in newton-meters. The robot, built with hydraulic actuators capable of immense force, pulled firmly, then held, waiting for the drive to engage. By its calibration, “firmly” meant firmly. It bent the lever, preventing the electrical contact needed to initiate the drive sequence. A research base with thousands of people sat waiting, and now there was a ship with an active drive that needed to vent before it destroyed everything within range. The robot sat calmly in the cockpit, completely unaware it had done anything wrong, while the researchers scrambled to reach Dr. Susan Calvin, the robopsychologist, for help.

The robot didn’t do anything wrong. It did exactly what it was told.

The failure wasn’t a malfunction. It was a communication failure dressed up as an execution failure. The human assumed a shared context that didn’t exist. The robot assumed the instruction was complete. The gap between those two assumptions is exactly where things went wrong.

The Literal Machine

LLMs operate without human intuition. They are not guessing at your intent. They process instructions using the context they have, nothing more. When you tell these systems to do something, they do something. Whether that something matches what you meant depends entirely on how much context you established beforehand.

“Firmly” is obvious to a human. A human reads physical feedback, calibrates effort, and self-corrects. A robot with industrial actuators has a word, “firmly,” and its own baseline for what that means. The robot wasn’t wrong. The instruction was incomplete.

“Polish” is obvious to a human. It means refine, tighten, improve. Unless the language model lacked the context to know what language the text was already in, or the word’s most common associations pointed somewhere else entirely. The LLM wasn’t wrong. The instruction was ambiguous.

This is not an edge case. This is the default operating condition of literal systems.

The Same Problem Exists Without Robots

We have been living this story in software delivery for decades and calling it something else. Vague acceptance criteria. Incomplete requirements. User stories that say “as a user I want to manage my account” without specifying what “manage” means, which users have access to what, or what happens on failure. The developer does exactly what they understood. The tester validates a different understanding. The product owner expected a third interpretation. The stakeholder was picturing a fourth.

The code was not wrong. The communication was broken.
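To make that concrete, here is one way a team might pin down a single slice of “manage my account” as executable acceptance criteria. It is a minimal Python sketch: `AccountService`, `update_email`, and the three rules it enforces are hypothetical, chosen only to show what answering “who can do what, and what happens on failure” looks like once it is written down where a machine can check it.

```python
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    """Raised when a user tries to change an account they do not own."""


class InvalidEmail(ValueError):
    """Raised when a new email address fails the (deliberately simple) validation rule."""


@dataclass
class AccountService:
    # Hypothetical in-memory store mapping account id -> email address.
    emails: dict[str, str] = field(default_factory=dict)

    def update_email(self, actor_id: str, account_id: str, new_email: str) -> None:
        # Rule 1: a user may change only their own account.
        if actor_id != account_id:
            raise PermissionDenied(f"{actor_id} may not modify {account_id}")
        # Rule 2: reject addresses without text on both sides of a single "@".
        local, sep, domain = new_email.partition("@")
        if not sep or not local or not domain or "@" in domain:
            raise InvalidEmail(new_email)
        # Rule 3: the stored value changes only after every check has passed.
        self.emails[account_id] = new_email


def check_acceptance_criteria() -> None:
    svc = AccountService({"alice": "alice@example.com", "bob": "bob@example.com"})

    # Given Alice is signed in, when she changes her own email, then it is saved.
    svc.update_email("alice", "alice", "alice@new.example.com")
    assert svc.emails["alice"] == "alice@new.example.com"

    # Given Alice is signed in, when she tries to change Bob's email,
    # then the request is refused and Bob's email is unchanged.
    try:
        svc.update_email("alice", "bob", "mallory@example.com")
    except PermissionDenied:
        pass
    else:
        raise AssertionError("expected PermissionDenied")
    assert svc.emails["bob"] == "bob@example.com"

    # Given a malformed address, the request is refused and nothing changes.
    try:
        svc.update_email("bob", "bob", "not-an-email")
    except InvalidEmail:
        pass
    else:
        raise AssertionError("expected InvalidEmail")
    assert svc.emails["bob"] == "bob@example.com"


if __name__ == "__main__":
    check_acceptance_criteria()
    print("all acceptance criteria pass")
```

The rules themselves are not the point; a real team would pick its own. What matters is that the developer, the tester, and the product owner are now reading the same three sentences instead of four private interpretations.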

Continuous delivery (CD) makes this brutally visible, which is one of the things that makes people uncomfortable about it. A CD pipeline is a literal machine. It executes instructions with precision and no judgment. When a pipeline fails because test assertions are ambiguous about whether they’re validating behavior or implementation, or because a configuration override behaves differently in production than in staging because someone assumed a shared context that wasn’t there, that is not a pipeline problem. That is a communication problem, one the pipeline was kind enough to surface in a controlled environment instead of at 2 AM in production.
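Here is a small, hypothetical illustration of the configuration case. Assume staging runs on the base settings while production layers an override on top, and the person writing the override expected it to patch only the `host` key. The key names and both merge functions below are made up for the example; real configuration tools differ in which of these behaviors they use, which is exactly why it has to be stated rather than assumed.

```python
import copy


def shallow_merge(base: dict, override: dict) -> dict:
    # Top-level keys in `override` replace those in `base` wholesale,
    # including entire nested dictionaries.
    return {**base, **override}


def deep_merge(base: dict, override: dict) -> dict:
    # Recursively merge nested dicts so an override replaces only
    # the keys it actually names. Neither input is mutated.
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical settings: staging uses `base` as-is, production adds an override.
base = {"db": {"host": "localhost", "pool_size": 10, "timeout_s": 5}}
production_override = {"db": {"host": "db.internal"}}

print(shallow_merge(base, production_override))
# {'db': {'host': 'db.internal'}}
print(deep_merge(base, production_override))
# {'db': {'host': 'db.internal', 'pool_size': 10, 'timeout_s': 5}}
```

Nothing here is buggy in the usual sense. `shallow_merge` does precisely what it says, and production quietly loses `pool_size` and `timeout_s`. The defect is the unstated assumption about what “override” means.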

The pipeline isn’t failing you. It’s doing you a favor by refusing to paper over your ambiguity with intuition. Humans smooth over these gaps constantly. Pipelines don’t. That’s the point.

Precise, unambiguous instructions are not a nice-to-have for automated systems. They are the contract. Every variable name, every acceptance criterion, every configuration value is a communication act with a system that will interpret it literally. The gap between what you meant and what you said is where your failures live.

The Fix Is Clarity

The instinct when something like this happens is to blame the tool. The LLM hallucinated. The robot malfunctioned. But that framing lets the real problem off the hook. The tool did its job. The instruction failed.

The fix is clear goals and clear measurements to indicate whether those goals were achieved. It has to be a habit, not a response to failure. Every instruction you write for an automated system is a specification. Provide context. Define terms. Specify constraints. “Polish this paragraph” becomes “edit this paragraph for clarity and concision, maintaining the original language and tone.” That is not bureaucracy. That is communication doing its job.
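As a rough sketch of treating a prompt as a specification, the Python below writes the goal, the constraints, and one measurable check down in one place. The `EditRequest` class, its fields, and the word-count rule are hypothetical; it does not call any LLM, and a word count only measures the concision goal. Keeping the language and tone intact would still need its own check or a human reviewer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditRequest:
    # Hypothetical fields: every assumption hiding behind "polish" is written down.
    text: str
    goal: str = "Edit this paragraph for clarity and concision."
    language: str = "English"
    tone: str = "the original tone"
    max_length_ratio: float = 1.0  # edited text may not exceed the original word count

    def to_prompt(self) -> str:
        # Render the goal and constraints as explicit instructions, with the text last.
        return "\n".join([
            self.goal,
            f"Keep the text in {self.language}; do not translate it.",
            f"Preserve {self.tone}.",
            f"Use at most {self.max_length_ratio:.0%} of the original word count.",
            "Return only the edited paragraph.",
            "",
            self.text,
        ])

    def accepts(self, result: str) -> bool:
        # A measurable check for the concision goal only.
        return len(result.split()) <= self.max_length_ratio * len(self.text.split())


if __name__ == "__main__":
    req = EditRequest(
        text="The LLM didn't edit the paragraph, it translated it into another language entirely."
    )
    print(req.to_prompt())
    print(req.accepts("The LLM translated the paragraph instead of editing it."))  # True
```

The design choice worth copying is not the class. It is that the goal and the test for the goal live side by side, so “did it work?” has an answer before anyone runs the prompt.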

The same discipline applies to acceptance criteria, configuration values, pipeline definitions, and prompts. When a test fails in staging and no one can explain why it passed locally, someone left a gap. The system found it. Be grateful it surfaced there instead of in production.

If you are working with AI agents in your delivery pipeline, the Minimum CD project has a practical guide to agent-assisted specification that walks through exactly this problem: how to write specifications that are unambiguous enough for an agent to execute. The core insight there mirrors the lesson here. The agent does not write the specification for you. It helps you find the gaps in your own thinking before a system finds them for you at the worst possible moment.

Asimov’s engineers had to send a human to fix the problem their vague command created. That is not a model for scaling software delivery. You cannot send a human into every ambiguous gap in your pipeline when agents are in the mix.

Write precise instructions. The robots are listening.
